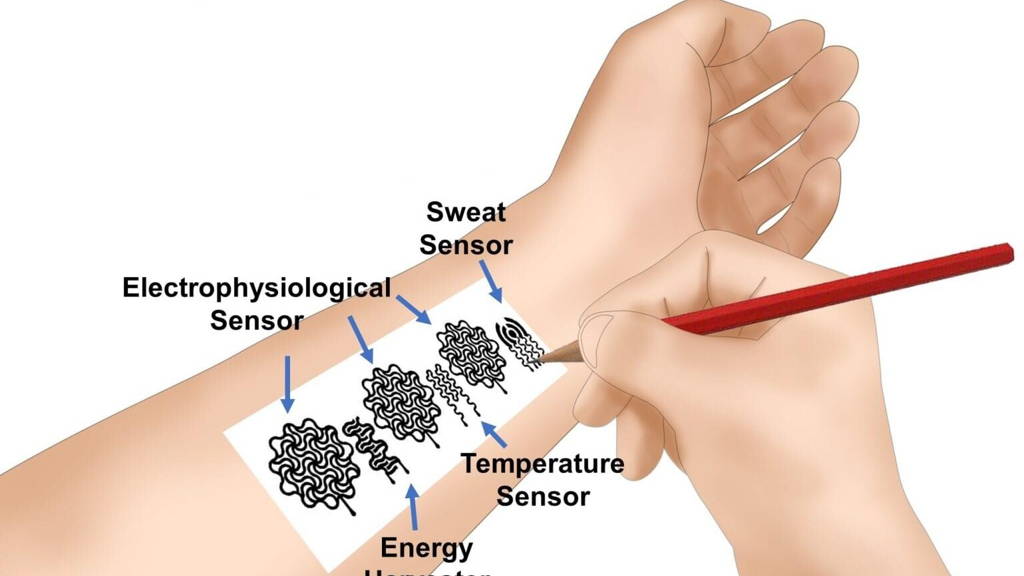

Drawing Sensors on Our Skin

One day, instead of buying electronic devices to monitor our health, we may be drawing them on our skin. That, at least, is the suggestion of new research by engineers from the University of Missouri, who showed that a simple combination of pencil and paper can be used to create different sensors on our own. The results were published in the Proceedings of the National Academy of Sciences. Many existing commercial biomedical devices worn on the skin feature two key elements: a biomedical measuring component and an elastic material wrapped around it, such as plastic, which supports the component and keeps it in contact with the skin. Both elements can be replaced easily.

During the research, the scientists found that pencils containing more than 90% graphite (optimally 93%) can conduct the necessary amount of energy, which is produced by the friction between pencil and paper during drawing or writing. Besides the pencil, all we need to create different bioelectronic devices for our skin is plain office printer paper and a biocompatible spray-on adhesive that keeps the paper in place on the skin. The discovery could find wide use in home-based personalized health care, education, and scientific research. "For example, if a person has a sleep issue, we could draw a biomedical device that could help monitor that person's sleep levels," explains Zheng Yan, assistant professor at the College of Engineering. Another advantage is that the paper can decompose within one week, which is long enough to gather a sufficient amount of data.

Source: University of Missouri

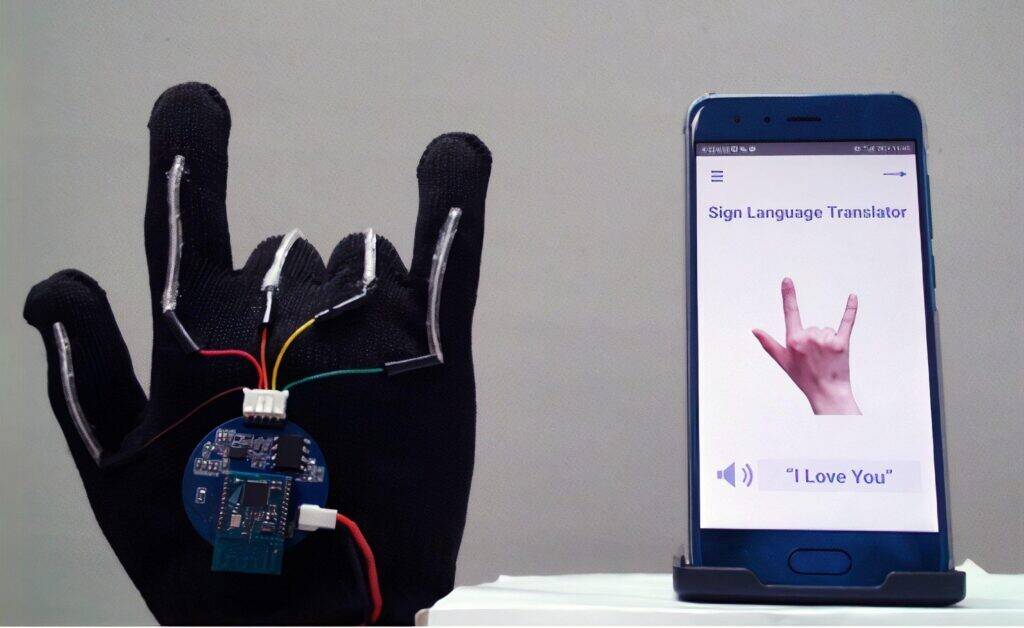

A Glove That Translates Sign Language

Bioengineers from the University of California, Los Angeles (UCLA), have designed a glove-like device that translates American Sign Language into English in real time using a mobile app.

The results of their research were published in Nature Electronics. Each glove is fitted with thin, stretchable sensors, made from electrically conducting yarns, that run along each of the five fingers. By monitoring hand motions and finger placements, the sensors can recognize individual letters, digits, words, and phrases. The device transforms the movements into electrical signals, which are passed to a coin-sized circuit board and sent wirelessly to a smartphone. There, the app converts them into speech at a rate of about one word per second. The researchers also placed sensors on the testers' faces (between the eyebrows and on one side of the mouth) to capture the facial expressions that are part of American Sign Language.

Source: UCLA

Robotic Guide for Visually Impaired People

A student from Loughborough University (UK) designed an autonomous way-finding device for blind and visually impaired people who do not have a guide dog. There are 253 million visually impaired people in the world. Only a small number of them use guide dogs. Others have to rely on white canes and spatial memory.

Anthony Camu, a final-year student of Industrial Design and Technology, designed a product that mimics the functions of a guide dog. Inspired by autonomous vehicles and by video game consoles that use virtual reality, he created "Theia", a mobile device that can lead its users through outdoor areas and large indoor spaces. A blind or visually impaired person can give it voice instructions, such as: "Take me to the closest park." As an Internet of Things device, Theia can process online data in real time, such as traffic (pedestrians and vehicles) or the weather, to lead users safely to their destination. In high-risk places, such as zebra crossings or busy intersections, the device switches to a manual mode. Theia will be equipped with remote sensing sensors and cameras to capture 3D images of the surroundings.

Its ergonomic handle can guide users through complicated walking maneuvers by moving their hands, using a new form of force feedback that involves a control moment gyroscope (CMG). Theia users would be able to feel all the subtleties of being led, such as speed, direction, and a "pulling" sensation similar to that of holding the leash of a guide dog. The first model faced many problems, such as breaking motors and excessive vibrations; however, Anthony hopes to create better prototypes by working with other engineers.

"The goal of many non-sighted people is to be independent and live a normal life but unfortunately, many who endure vision loss feel excluded from situations and activities which many people take for granted, such as socializing, shopping or going to restaurants." Such limitations are usually formed due to the fear and anxiety associated with having a partial understanding of the surroundings. Theia has the capacity to expand a blind person's comfort zones," says Anthony Camu.

Source: Loughborough University

3D Bone Scaffolding

Researchers from Oregon Health & Science University (OHSU) have developed a small, Lego-inspired 3D brick that can help heal broken bones and may, in the future, be used to create human organs in laboratories. The hollow bricks form a scaffolding on which both hard and soft tissue can regrow. Moreover, according to research published in Advanced Materials, they enable the tissue to regrow faster than the regeneration methods currently in use. The scaffolding is built from cubic bricks measuring 1.5 millimeters, roughly the size of a flea. The bricks are easy to use and, like Lego, can be fitted together in different configurations to form bigger structures. Another advantage of the new scaffolding system is that it can be filled with a gel containing different growth factors, which speed up the repair of bone structures and blood vessels.

Source: Oregon Health & Science University (OHSU)